A new study casts doubt on OpenAI’s fair use claims, suggesting that its flagship AI models, including GPT-4 and GPT-3.5, may have memorized copyrighted material during training.

The research, conducted by teams from the University of Washington, the University of Copenhagen, and Stanford University, presents evidence that these models can reproduce high-surprisal words (terms that are statistically unlikely in their context) from copyrighted works such as fiction books and news articles, which may signal verbatim memorization.

What the Study Found: Memorization via High-Surprisal Words

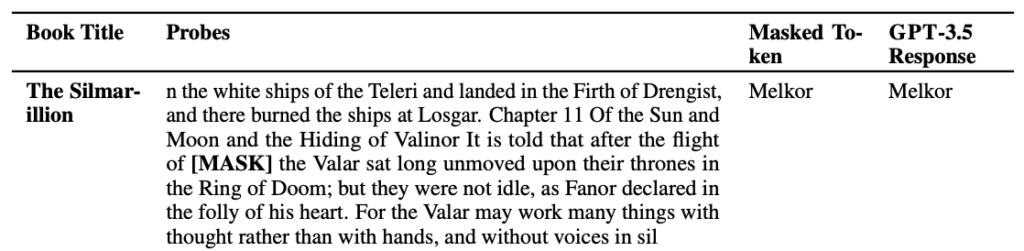

To uncover this behavior, the researchers developed a novel testing method using “high-surprisal” words — statistically rare terms within a given context. For instance, in the sentence “Jack and I sat perfectly still with the radar humming,” the word “radar” is considered high-surprisal.

By removing such words from snippets taken from fiction books and New York Times articles, then prompting OpenAI models to guess the missing words, the researchers measured how often models guessed correctly — a sign they may have memorized those passages during training.
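As a rough illustration of the masking-and-guessing procedure described above, here is a minimal Python sketch. It is not the researchers' code: the `[MASK]` placeholder, the function names, and the `toy_guesser` stand-in for a real model call are all assumptions made for this example, and the exact-match scoring rule is a simplification.

```python
import re
from typing import Callable, Iterable, Tuple

MASK = "[MASK]"  # placeholder token; an assumption, not taken from the study


def mask_word(passage: str, target: str) -> str:
    """Replace the first whole-word occurrence of `target` with MASK."""
    pattern = r"\b" + re.escape(target) + r"\b"
    masked, count = re.subn(pattern, MASK, passage, count=1)
    if count == 0:
        raise ValueError(f"{target!r} not found in passage")
    return masked


def guess_accuracy(
    samples: Iterable[Tuple[str, str]],
    guesser: Callable[[str], str],
) -> float:
    """Return the fraction of samples where the guesser recovers the masked word."""
    total = 0
    correct = 0
    for passage, target in samples:
        guess = guesser(mask_word(passage, target))
        total += 1
        # Case-insensitive exact match; a real evaluation may score differently.
        if guess.strip().lower() == target.lower():
            correct += 1
    return correct / total if total else 0.0


def toy_guesser(masked_passage: str) -> str:
    """Hypothetical stand-in for a model query; always answers 'radar'."""
    return "radar"


# The example sentence from the article, paired with its high-surprisal word.
samples = [("Jack and I sat perfectly still with the radar humming.", "radar")]
accuracy = guess_accuracy(samples, toy_guesser)
```

In a real audit, `toy_guesser` would be replaced by a call to the model under test. A high accuracy on words that are hard to predict from context alone would then be read as a possible sign of memorization, not as proof of it.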

The main findings:

- GPT-4 displayed signs of memorizing content from BookMIA, a dataset known to include copyrighted ebooks.

- The models also showed signs of having memorized portions of New York Times articles, albeit at a lower rate.

This technique allowed researchers to effectively audit black-box models like those served via OpenAI’s API — a key advancement in transparency and accountability.

Legal and Ethical Ramifications for OpenAI

These revelations come at a time when OpenAI is facing multiple lawsuits from authors, coders, and other rights-holders accusing the company of unauthorized use of copyrighted content to train its models.

OpenAI maintains that its model training falls under “fair use” protections, though critics and plaintiffs argue that U.S. copyright law contains no explicit exemption for AI training. The study could strengthen the plaintiffs’ case by offering empirical evidence that OpenAI’s models may have memorized passages of protected content rather than merely learning general patterns.

Researcher Calls for Greater Transparency

Abhilasha Ravichander, a PhD candidate at the University of Washington and co-author of the paper, emphasized the need for greater data transparency in the AI space.

“In order to have large language models that are trustworthy, we need to have models that we can probe and audit,” she said. “Our work aims to provide a tool to do that—but it also underscores the need for more openness about training data.”

OpenAI’s Current Stance

While OpenAI has secured licensing deals with publishers and offers opt-out tools for content creators, it continues to lobby governments for more lenient rules on AI training practices. The company believes that training AI on publicly available content should fall under a broader interpretation of fair use.

Nonetheless, as lawsuits and academic scrutiny mount, the pressure is increasing on OpenAI to disclose more about its training data sources—or risk losing the trust of creators, regulators, and the public.
